The principles of machine learning were laid down about 50 years ago, but only recently have they begun to be widely applied in practice. Thanks to growing computing power, computers first learned to reliably recognize objects in images and to play Go better than humans, and then to draw pictures from a text description or hold a coherent conversation in a chat. In 2021 and 2022, these scientific breakthroughs also became easily accessible. Anyone can subscribe to Midjourney and, for example, instantly illustrate books they have written themselves. And OpenAI has finally opened up its large language model GPT-3.5, a refined version of GPT-3 (Generative Pre-trained Transformer 3), to the general public through the ChatGPT service. Anyone can chat with the bot at chat.openai.com and see for themselves: it confidently maintains a coherent dialogue, explains complex scientific concepts better than many teachers, translates texts between languages with literary flair, and much, much more.

Illustration generated by Midjourney from the prompt “Gnome with magnifying glass lost among storage servers”

Put as simply as possible, ChatGPT’s language model is trained on a huge volume of text from the Internet and “remembers” which words, sentences and paragraphs tend to occur together and how they relate to each other. Numerous technical tricks and additional rounds of training with human conversation partners then optimize the model for dialogue. Because “everything is on the Internet”, the model can naturally hold a conversation on a wide variety of topics, from modern fashion and art history to quantum physics and programming.
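
The “remembers which words occur together” idea can be illustrated with a deliberately tiny toy: a bigram model that counts which word follows which in a handful of sentences and then predicts the most frequent follower. This is only an analogy and assumes nothing about ChatGPT’s internals beyond the paragraph above; the real model is a transformer neural network working on tokens over long contexts, not a table of word-pair counts. The function names and the three-sentence corpus are made up for the example.

```python
from collections import Counter, defaultdict
from typing import Optional


def train_bigrams(corpus: list[str]) -> dict[str, Counter]:
    """Count how often each word is immediately followed by each other word."""
    follows: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def most_likely_next(follows: dict[str, Counter], word: str) -> Optional[str]:
    """Return the word most often seen right after `word`, or None if there is none."""
    candidates = follows.get(word.lower())
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# A made-up three-sentence "Internet" to learn from.
corpus = [
    "the bot writes code",
    "the bot explains physics",
    "the bot writes letters",
]
model = train_bigrams(corpus)
print(most_likely_next(model, "bot"))  # writes
```

The model has no idea what a bot or code is; it only knows that “writes” followed “bot” more often than “explains” did. Scaled up enormously and made far more sophisticated, that statistical habit is why fluent output is not the same as understanding.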

Scientists, journalists and enthusiasts keep finding new uses for ChatGPT. The Awesome ChatGPT prompts website collects prompts (opening phrases) that make ChatGPT respond in the style of Gandalf or another literary character, write Python code, generate business letters and résumés, and even pretend to be a Linux terminal. For all that, ChatGPT remains just a language model, so everything listed above is nothing more than common combinations of words and the connections between them; don’t look for reasoning or logic behind it. Sometimes ChatGPT convincingly presents complete nonsense, for example, by citing scientific studies that don’t exist. Therefore, content from ChatGPT should be treated with caution. Nevertheless, even in its current form, the bot is already useful in many practical workflows across different industries. Here are a few examples from the field of cybersecurity.

Writing malicious code

On underground hacker forums, novice criminals describe how they have tried to write new Trojans with ChatGPT. The bot can write code, so if you clearly describe the functionality a criminal needs (“save all passwords to file X and send them via HTTP POST to server Y”), you can get a simple infostealer without any programming skills. However, law-abiding users have nothing to fear. If code written by the bot is actually used, security solutions will detect and neutralize it just as quickly and efficiently as all the malware previously written by humans. Moreover, unless an experienced programmer reviews such code, it is likely to contain subtle bugs and logic flaws that make the malware less effective.

In short, for now bots can compete only with novice virus writers.

Analyzing malicious code

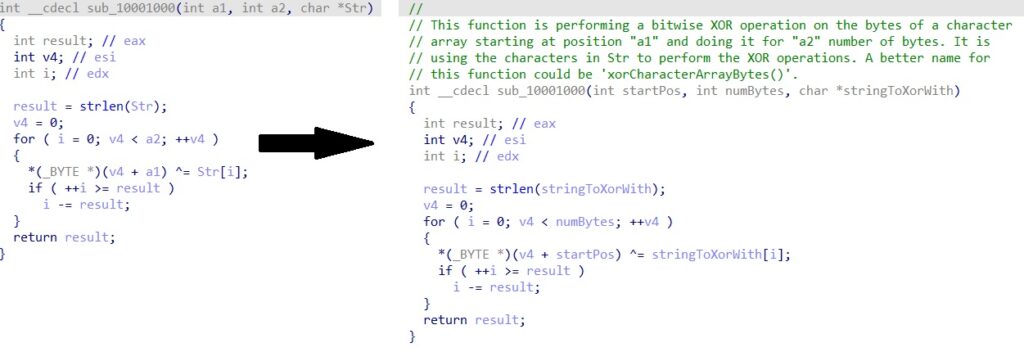

When information security analysts study new suspicious applications, they have to reverse-engineer them, that is, work through pseudocode or machine code to understand how it works. This work can’t be handed over to ChatGPT entirely, but a chatbot can already quickly explain what a particular code fragment does. Our colleague Ivan Kvyatkovsky has developed a plugin for IDA Pro that does exactly that. The language model under the hood is not ChatGPT itself but its “cousin” text-davinci-003, though this is a purely technical difference. The plugin sometimes fails or outputs nonsense, but it is worth putting into service for the cases where it automatically gives functions meaningful names and identifies the encryption algorithms used in the code, along with their parameters. It is especially valuable in a SOC, where perpetually overloaded analysts have to spend as little time as possible on each incident, so anything that speeds up analysis helps.
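
The two text-handling ends of such a plugin can be sketched in plain Python: composing a request from a decompiled function, and applying the model’s suggested names back to the pseudocode. This is not the code of the plugin mentioned above. `build_explain_prompt`, `apply_renames`, the prompt wording, and the assumption that the model replies with a JSON object mapping old names to new ones are all hypothetical; fetching pseudocode from IDA and calling the model are left out.

```python
import json
import re


def build_explain_prompt(pseudocode: str) -> str:
    """Compose a request asking the model to explain a decompiled function
    and propose clearer names. The wording is illustrative only."""
    return (
        "Explain what the following decompiled C function does, then reply "
        "with a JSON object mapping each current identifier to a more "
        "descriptive name.\n\n" + pseudocode
    )


def apply_renames(pseudocode: str, model_reply: str) -> str:
    """Apply old->new names from a JSON reply, matching whole identifiers only."""
    renames = json.loads(model_reply)  # JSONDecodeError is a ValueError subclass
    if not isinstance(renames, dict) or not all(
        isinstance(k, str) and isinstance(v, str) for k, v in renames.items()
    ):
        raise ValueError("expected a JSON object mapping names to names")
    if not renames:
        return pseudocode
    # One pass over all names, so a new name is never renamed again.
    pattern = re.compile(r"\b(" + "|".join(map(re.escape, renames)) + r")\b")
    return pattern.sub(lambda m: renames[m.group(1)], pseudocode)


# Hypothetical decompiler output and model reply.
sample = "int sub_401000(char *a1)\n{\n  return sub_402000(a1, 16);\n}"
reply = '{"sub_401000": "decrypt_config", "a1": "buffer"}'
print(apply_renames(sample, reply))
```

Word boundaries keep `a1` from matching inside a name such as `a10`, and the validation step rejects replies that are not a plain name-to-name mapping, which matters precisely because the model sometimes returns nonsense.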

Vulnerability scanning

A variation of the previous approach is automated searching for vulnerable code. The chatbot “reads” the pseudocode of a decompiled application and flags places that may contain vulnerabilities. What’s more, the bot offers Python code designed to exploit the vulnerability, a proof of concept (PoC). Of course, the bot can make all kinds of mistakes, both when searching for vulnerabilities and when writing PoC code, but even in this form the tool can be useful to attackers and defenders alike.
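
For contrast, the oldest and crudest way to flag places that may contain vulnerabilities is a fixed pattern list. The sketch below is that non-AI baseline, not what the chatbot does: it flags calls to a few C library functions notorious for buffer overflows and reports the line and the reason. The function list, the names `RISKY_CALLS` and `flag_suspicious_lines`, and the sample pseudocode are illustrative assumptions.

```python
import re

# A small, deliberately incomplete list of C library calls that are
# well known for causing buffer overflows when misused.
RISKY_CALLS = {
    "gets": "reads input with no length limit",
    "strcpy": "copies without checking the destination size",
    "strcat": "appends without checking the destination size",
    "sprintf": "formats into a buffer without a size limit",
}

# Match a risky name as a whole word followed by "(", so that safer
# variants such as fgets, strncpy or snprintf are not flagged.
CALL_RE = re.compile(r"\b(" + "|".join(RISKY_CALLS) + r")\s*\(")


def flag_suspicious_lines(pseudocode: str) -> list[tuple[int, str, str]]:
    """Return (line number, function name, reason) for every risky call."""
    findings = []
    for lineno, line in enumerate(pseudocode.splitlines(), start=1):
        for match in CALL_RE.finditer(line):
            name = match.group(1)
            findings.append((lineno, name, RISKY_CALLS[name]))
    return findings


# Hypothetical decompiler output used only for the demonstration.
decompiled = """int __cdecl main(int argc, const char **argv)
{
  char buf[64];
  gets(buf);
  strncpy(dst, buf, 63);
  sprintf(out, "%s", buf);
  return 0;
}"""

for lineno, name, reason in flag_suspicious_lines(decompiled):
    print(f"line {lineno}: {name} ({reason})")
```

A chatbot is instead asked to judge the code in context rather than match a list, which is why it may catch problems a list misses and also why its mistakes are harder to predict.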

Security consulting

Since ChatGPT has absorbed what is written about cybersecurity online, its advice on the topic sounds convincing. But, as with any advice from a chatbot, you never know where it was drawn from, so among ten perfectly legitimate tips there may be one completely absurd one. Still, the tips in the screenshot below are all reasonable enough:

Phishing and BEC

Convincing-looking text is the strong suit of GPT-3 and ChatGPT, so attackers are probably already using chatbots to automate targeted phishing. The main problem with mass phishing campaigns is that they are unconvincing: the text is too generic and identical across messages, and it ignores who the recipient is. Spear phishing, in which a human attacker writes an email by hand to a single victim, is quite expensive, so it is used only in targeted attacks. ChatGPT will significantly shift this balance, because it can generate convincing, personalized email texts on an industrial scale. True, to get an email that contains all the necessary components, you have to give the chatbot very detailed instructions.

But serious phishing attacks usually consist of a series of emails, each of which gradually builds the victim’s trust. For the second, third and subsequent emails, ChatGPT really saves attackers time: since the chatbot remembers the context of the conversation, each long and polished follow-up email can be generated from a very short and simple prompt.
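
How a chatbot “remembers the context” is simpler than it sounds: in chat-style model APIs the model usually keeps no memory between requests, and the client resends the whole conversation so far with every new message. The sketch below shows only that bookkeeping, with a harmless stand-in model. `Conversation` and `echo_model` are hypothetical names, and the `role`/`content` message shape mirrors common chat APIs but is an assumption here, not a specific library’s interface.

```python
from dataclasses import dataclass, field
from typing import Callable

# One chat message, e.g. {"role": "user", "content": "..."}.
Message = dict[str, str]


@dataclass
class Conversation:
    """Keeps the running history and sends all of it with every request."""

    model: Callable[[list[Message]], str]  # stand-in for a real model call
    history: list[Message] = field(default_factory=list)

    def ask(self, prompt: str) -> str:
        self.history.append({"role": "user", "content": prompt})
        # The model only "remembers" earlier turns because they are resent here.
        reply = self.model(list(self.history))
        self.history.append({"role": "assistant", "content": reply})
        return reply


def echo_model(messages: list[Message]) -> str:
    """Fake model that only reports how much context it was given."""
    return f"I can see {len(messages)} message(s)"


chat = Conversation(model=echo_model)
print(chat.ask("Summarize today's meeting."))  # I can see 1 message(s)
print(chat.ask("Shorter, please."))  # I can see 3 message(s)
```

Because every request carries the full history, a two-word follow-up is enough for the model to produce a long reply consistent with everything said earlier.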

Moreover, attackers can simply feed the victim’s reply to the chatbot and get a convincing continuation of the correspondence within seconds.

Attackers can also imitate a particular writing style: given a short sample, a chatbot can easily be told to keep writing in that style. This makes it possible to convincingly forge, for example, emails from one employee to another.

Unfortunately, this means that the number of effective phishing attacks will only grow, and the chatbot will be just as convincing in email as on social networks and in instant messengers.

What are the countermeasures? Content analysis experts are actively working on tools that detect chatbot-generated text. Time will tell how effective such filters prove to be. In the meantime, we can offer only two standard tips (vigilance and security awareness training), plus one new one: learn to recognize bot-generated text. Its statistical properties can’t be spotted by eye, but small stylistic quirks and slight incoherence still give the robot away. You can practice with the game “robot or human”, for example, here.
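
One statistical property that detection tools are commonly described as using is perplexity: how “surprised” a language model is by a text, given the probability it assigns to each successive token. Text a model finds very predictable (low perplexity) tends to point toward machine generation, though on its own this is a weak signal. The sketch assumes you already have per-token probabilities from some model; the function name is hypothetical, and the numbers in the example are made up for illustration, not measurements.

```python
import math


def perplexity(token_probs: list[float]) -> float:
    """Return exp(-(1/N) * sum(log p_i)) for the N per-token probabilities
    a language model assigned to a text. Lower values mean the text was
    more predictable to that model."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    if any(not 0.0 < p <= 1.0 for p in token_probs):
        raise ValueError("probabilities must be in (0, 1]")
    mean_log_prob = sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(-mean_log_prob)


# Illustrative, made-up probabilities for two short texts.
predictable = [0.9, 0.8, 0.85, 0.9]
surprising = [0.2, 0.05, 0.3, 0.1]
print(perplexity(predictable) < perplexity(surprising))  # True
```

For intuition, four tokens each given probability 0.5 yield a perplexity of about 2: on average the model was as uncertain as a coin flip at every step.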
